RTN: Zombie VCs and what that means for founders

+ DeepMind's new prediction model and huge computing clusters

The RealTech Conference is next Wednesday. Tickets are sold out, but if you’d like to join the waitlist, sign up here.

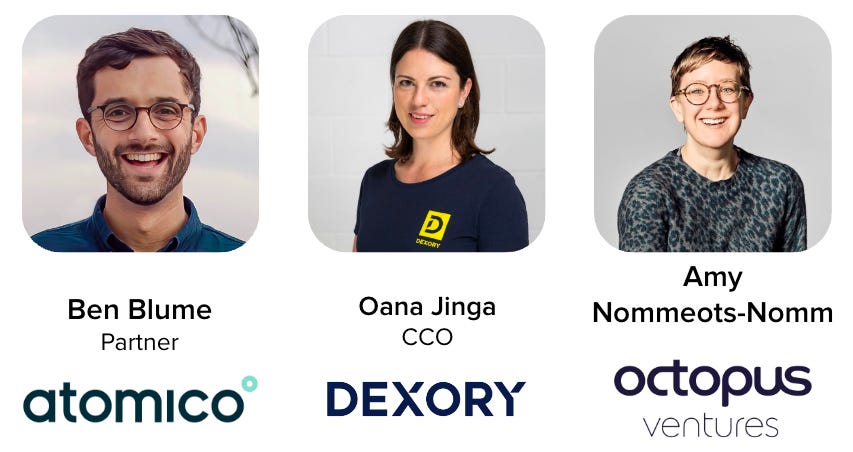

We’re hosting a panel (speakers below) on ‘Raising later-stage funding for Frontier Tech companies’.

If you’re a founder of a scaled Frontier Tech company and want to help fellow founders in less than 2 minutes 🙏🏻

Please consider f…
